Compliance Risk Management: Why Over-Governance in AI Is as Risky as No Governance

CTOs often see compliance risk management as a way to protect their businesses from operational, legal, and regulatory threats. However, excessive controls can be counterproductive in the AI era.

As businesses advance in AI adoption, they often swing between excessive regulation and unchecked experimentation. Both approaches are risky. Over-regulation stifles innovation and drives teams to use unapproved AI, while lax controls can lead to regulatory violations.

The real problem facing tech leaders isn’t choosing between speed and control. It is creating flexible, well-balanced compliance systems that are integrated into daily tasks.

This article explores how modern compliance risk management must evolve for AI-driven enterprises, shifting from rigid control models to adaptive, risk-based governance embedded in technology workflows.

Compliance risk management: When compliance becomes the bottleneck

In highly regulated sectors, governance, risk management, and compliance processes are typically designed for static systems. AI, by contrast, learns, adapts, and interacts across workflows.

When organizations apply legacy approval structures to AI projects, several problems emerge:

- Overly long AI approval workflows delay deployment.

- Developers bypass formal review channels.

- Business teams experiment with external tools outside IT oversight.

- Shadow AI risks grow silently.

Excessive control can weaken AI security. When teams feel restricted, they continue to innovate, but outside official processes.

This is where the distinction between shadow AI and shadow IT becomes relevant. Historically, shadow IT referred to unsanctioned hardware or software. Shadow AI is more dynamic: employees use generative AI tools, copilots, or external models without governance visibility.

Over-governance within formal systems often leads to under-governance outside established processes.

The false comfort of documentation

Many boards think having lots of policies means strong AI governance. But just having documents isn’t the same as managing AI risks.

True compliance management in AI requires:

- Clear AI decision rights in enterprise structures.

- Defined accountability for model outcomes.

- Continuous monitoring, not one-time reviews.

- Measured AI risk mitigation strategies tied to business impact.

An AI governance plan that exists only on paper does not reduce risk and may create a false sense of security.

CTOs should consider whether their governance model enables responsible development or simply impedes progress.

The Compliance Myth: Why Over-governance in AI Is as risky as no governance

A risk-based approach that aligns with evolving regulations and security needs helps avoid both inaction and disorder.

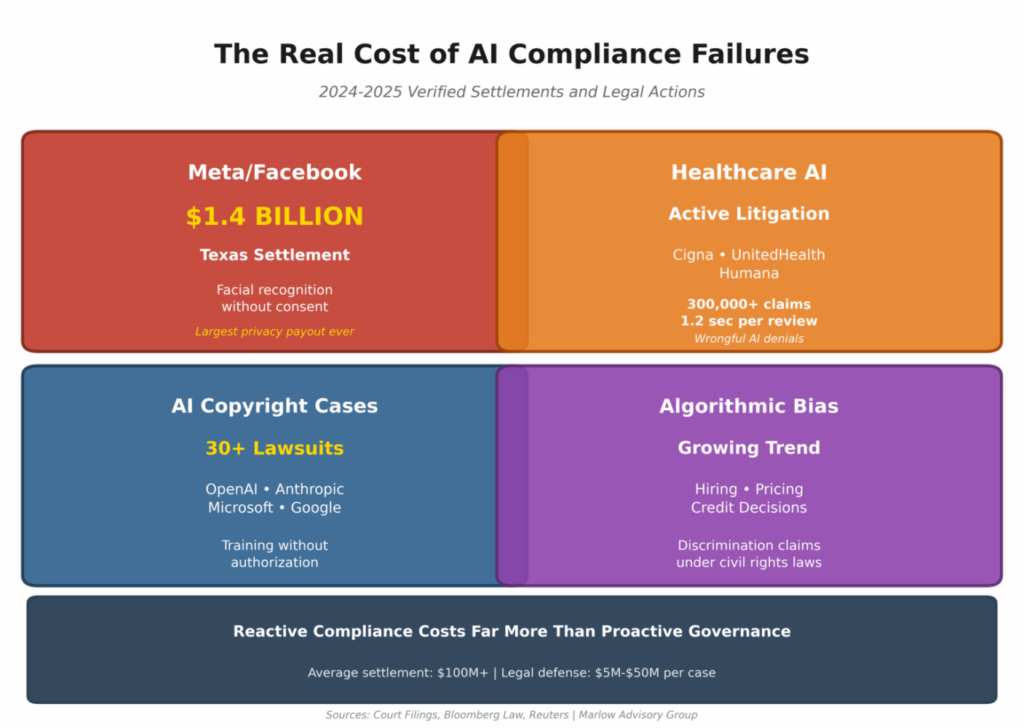

The dangers of weak AI governance are widely understood: bias, privacy violations, regulatory penalties, and reputational damage.

The risks of over-governance are less visible but just as significant:

- Loss of competitive edge.

- Slower product cycles.

- Talent frustration and attrition.

- Migration to unapproved tools.

- Reduced experimentation capacity.

Effective compliance risk management balances protection with enablement. It ensures that high-risk AI use cases receive rigorous oversight, while low-risk experimentation remains fluid yet still tracked.

For many CTOs, 2026 feels like a compliance arms race. New AI regulations. Board scrutiny. Audit committees asking sharper questions. Investors demanding evidence of control. In response, organizations double down on compliance risk management: more policies, more reviews, more gates.

But here’s the uncomfortable truth: in AI, over-governance can be as dangerous as no governance at all.

The problem isn’t compliance. The problem is how we misunderstand it. The following are common myths influencing enterprise approaches to AI compliance and risk management, which CTOs should address promptly.

Myth 1: More controls automatically reduce risk

It is a reassuring belief that adding layers of control will reduce exposure to AI-related uncertainty.

In practice, excessive controls often increase AI governance risk.

When approval cycles stretch from weeks to months, business units don’t stop innovating — they go around governance. Marketing teams adopt external AI tools. Engineers spin up open-source models in isolated environments. Operations teams automate workflows quietly.

This is the rise of shadow AI.

Unlike shadow IT, shadow AI is harder to detect. It lives in APIs, browser tools, plug-ins, and copilots. The stricter the gatekeeping, the more likely experimentation shifts underground, creating unmanaged shadow AI risks that compliance teams cannot see.

Effective governance, risk management, and compliance are not about maximum friction. They are about structured visibility.

Myth 2: If it’s documented, it’s governed

Many companies now have well-designed AI policy documents and formal governance frameworks. But having documents isn’t the same as putting them into action.

There are organizations with detailed AI ethics statements that cannot answer:

- Where are our production models running?

- Who owns model retraining?

- What are our escalation paths if a model drifts?

- How are AI approval processes tracked?

A written framework does not equal operational governance.

True enterprise AI governance embeds controls into engineering workflows, such as model registries, automated logging, bias-testing pipelines, and version control. Governance frameworks in AI must live in systems, not slide decks.
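As a minimal sketch of what "living in systems" could mean, the registry entry below stores answers to the four questions above as required fields. The class, the field names, and the sample values are illustrative assumptions, not a reference to any particular model-registry product.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class ModelRecord:
    """One registry entry per production model (all field names are hypothetical)."""
    name: str
    environment: str         # where the production model is running
    retraining_owner: str    # who owns model retraining
    escalation_contact: str  # who is contacted if the model drifts
    approval_ticket: str     # where the approval decision is tracked
    last_reviewed: date


def missing_fields(record: ModelRecord) -> list[str]:
    """Return the names of governance fields that were left empty."""
    return [key for key, value in vars(record).items() if value in ("", None)]


# Sample entry with one gap: nobody has been named as retraining owner.
record = ModelRecord(
    name="churn-scorer",
    environment="prod-eu-west",
    retraining_owner="",
    escalation_contact="ml-oncall@example.com",
    approval_ticket="GOV-123",
    last_reviewed=date(2026, 1, 15),
)
print(missing_fields(record))
```

A check like `missing_fields` could run whenever a model is registered, so that an unanswered ownership question surfaces at registration time instead of during an incident.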

Myth 3: Compliance is the legal team’s job

This myth can be especially expensive.

AI compliance isn’t just a regulatory issue; it’s also about how systems are built.

When AI systems influence pricing, hiring, fraud detection, or healthcare decisions, governance touches:

- Data architecture

- Model lifecycle management

- Security posture

- Incident response design

- Enterprise AI security integration

If compliance is managed only by legal or risk teams, technical considerations are often overlooked. CTOs must share responsibility for AI governance, as they oversee system development and usage.

Compliance that is not aligned with technology is ineffective. Conversely, technical innovation without compliance introduces significant risks.

Myth 4: Low-risk AI doesn’t need formal oversight

“It’s just an internal productivity tool.” Or, “It’s only summarising documents.” Or, “It’s not customer-facing.”

These assumptions often do not withstand closer scrutiny.

Internal AI systems can still expose:

- Sensitive data

- Intellectual property

- Biased outputs influencing decision-making

- Reputational exposure if leaked

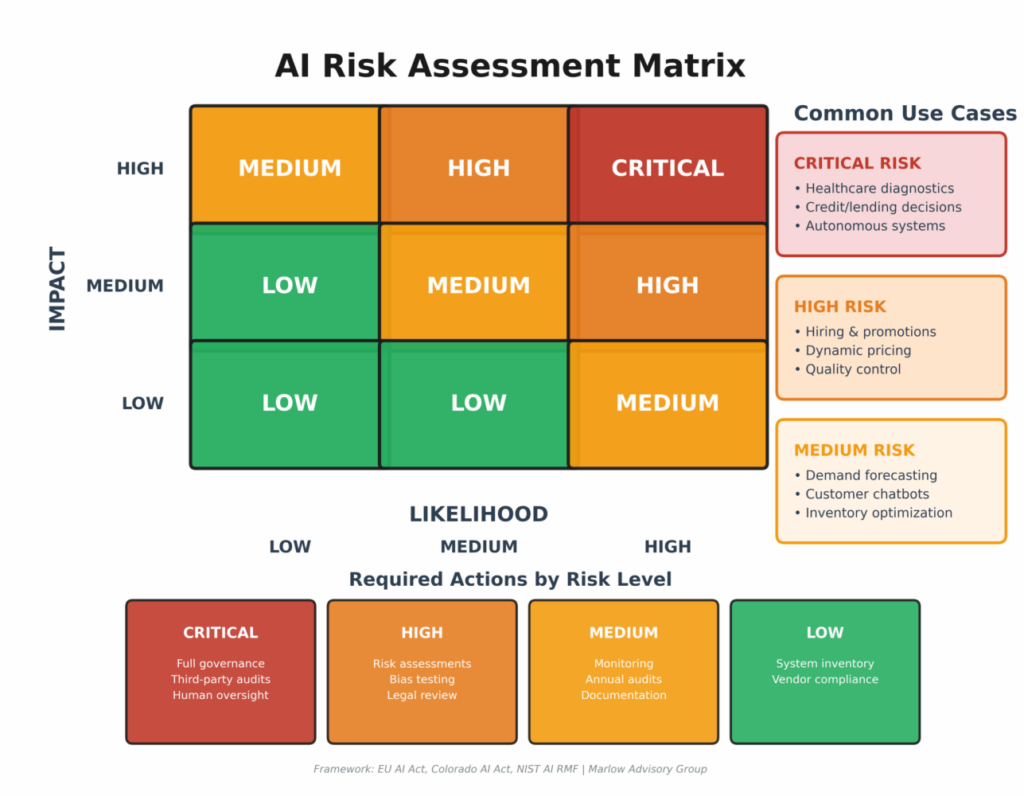

Effective AI compliance risk management uses a tiered approach. Not every tool needs the same level of review, but every AI system should be tracked in your compliance and risk management process.

Proportionate governance reduces friction while maintaining oversight.
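One way to picture a tiered approach is a small classification function applied to every system in the inventory. The three yes/no questions and the tier rules below are a hypothetical rubric chosen for illustration; a real rubric would come from your own risk and legal teams.

```python
from enum import Enum


class RiskTier(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


def classify(customer_facing: bool, handles_sensitive_data: bool,
             influences_decisions: bool) -> RiskTier:
    """Assign a review tier from three yes/no questions (hypothetical rubric)."""
    if influences_decisions and (customer_facing or handles_sensitive_data):
        return RiskTier.HIGH
    if customer_facing or handles_sensitive_data or influences_decisions:
        return RiskTier.MEDIUM
    return RiskTier.LOW


# Every system is inventoried; only the depth of review changes with the tier.
inventory = {
    "doc-summarizer": classify(False, True, False),
    "credit-model": classify(True, True, True),
    "meeting-notes-bot": classify(False, False, False),
}
for system, tier in inventory.items():
    print(f"{system}: {tier.value}")
```

Note that the internal document summarizer still lands in the medium tier because it touches sensitive data, which is the point of this myth: "internal" does not automatically mean "low risk".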

Myth 5: Automation reduces compliance risk

AI-driven monitoring tools promise autonomous detection, remediation, and tuning. In database management, fraud detection, and cloud optimisation, AI can surface issues faster than any human team.

But finding issues isn’t the same as making decisions about them.

In highly regulated environments, timing matters as much as technical accuracy. An AI-recommended change during a reporting window may be technically correct but operationally dangerous.

Therefore, AI risk strategies should ensure that humans remain responsible for critical decisions.

Automation speeds up results, but it doesn’t remove the need for accountability.
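The reporting-window example can be sketched as a simple routing rule: an AI-recommended change is never applied automatically, and it is deferred outright while a freeze window is open. The window dates, function names, and status strings are assumptions made for this illustration.

```python
from datetime import datetime

# Hypothetical reporting freeze: quarter-end close, 28 March to 3 April 2026.
FREEZE_WINDOWS = [(datetime(2026, 3, 28), datetime(2026, 4, 3))]


def in_freeze_window(now: datetime) -> bool:
    """True if `now` falls inside any configured reporting window."""
    return any(start <= now < end for start, end in FREEZE_WINDOWS)


def route_recommendation(change: str, now: datetime) -> str:
    """Route an AI-recommended change; this function never applies it itself."""
    if in_freeze_window(now):
        return f"deferred: {change} (reporting window open)"
    return f"awaiting human approval: {change}"


print(route_recommendation("rebuild index on ledger table", datetime(2026, 3, 30, 10, 0)))
print(route_recommendation("rebuild index on ledger table", datetime(2026, 4, 10, 10, 0)))
```

The same recommendation gets a different outcome depending only on timing, which is the kind of context a detection tool on its own would not weigh.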

Myth 6: Governance slows innovation

This is the most persistent myth and often generates the greatest resistance.

The belief that compliance always slows product development stems from outdated practices such as manual reviews, rigid checklists, and separate approval boards.

Modern AI governance should accelerate development.

When governance is embedded:

- Teams know the boundaries.

- Approval workflows are clear.

- Risk tiers are predefined.

- AI approval workflows are automated where appropriate.

Clarity accelerates innovation, while ambiguity hinders it.

The fastest-moving organizations are not the least governed. They are the most structurally aligned.

Compliance risk management in AI and designing AI governance that scales

So, what do balanced governance frameworks and compliance risk management in AI look like in practice?

- First, classify AI systems by impact. Borrowing from emerging global approaches, including EU AI Act-style risk tiering, organizations should align controls to exposure levels. Not every chatbot needs the same scrutiny as an AI-driven credit model.

- Second, build governance into daily workflows. Rather than separate review steps, put AI compliance checks right into DevOps and MLOps pipelines. Automate monitoring, documentation, and audit trails whenever you can.

- Third, make ownership clear. AI governance falls apart when no one is accountable. CTOs should spell out who approves models, who checks for drift, and who is responsible if something goes wrong.

- Fourth, address shadow AI proactively. Rather than banning tools outright, provide sanctioned alternatives and clear usage guidance. Reducing friction lowers shadow AI risks.
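The second step, building checks into DevOps and MLOps pipelines, might look like the gate below: a function a pipeline could call before deployment to confirm that the evidence required for a model's risk tier is present. The manifest keys, tier names, and evidence lists are hypothetical, not a standard.

```python
# Evidence each tier must provide before deployment (illustrative values only).
REQUIRED_EVIDENCE = {
    "low": {"owner", "registry_entry"},
    "medium": {"owner", "registry_entry", "bias_test_report"},
    "high": {"owner", "registry_entry", "bias_test_report", "human_signoff"},
}


def governance_gate(manifest: dict) -> list[str]:
    """Return the list of missing governance items; an empty list means pass."""
    tier = manifest.get("risk_tier")
    if tier not in REQUIRED_EVIDENCE:
        return ["risk_tier missing or unknown"]
    # Only keys with a non-empty value count as provided evidence.
    provided = {key for key, value in manifest.items() if value}
    return sorted(REQUIRED_EVIDENCE[tier] - provided)


manifest = {
    "risk_tier": "high",
    "owner": "payments-ml-team",
    "registry_entry": "models/fraud-detector/v7",
    "bias_test_report": "reports/fraud-v7-bias.html",
    "human_signoff": "",
}
failures = governance_gate(manifest)
print("PASS" if not failures else f"BLOCKED, missing: {failures}")
```

Because the requirements scale with the tier, a low-risk tool passes with two fields while a high-risk model is blocked until a named person has signed off.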

Compliance risk management works best when it is predictable, transparent, and aligned with operational realities.

Geoffrey Marlow from Marlow Advisory Group shared on LinkedIn

Every AI system in your organization needs an owner. Someone responsible for its compliance, performance, and risk profile. This isn’t your Information Technology (IT) department’s job alone. Your AI governance committee should include representatives from legal, operations, Human Resources (HR), and executive leadership. When things go wrong (and eventually, something will), you need clear lines of accountability and decision-making authority.

For CTOs looking to operationalize AI governance and compliance risk management, Marlow has outlined a practical framework:

The hybrid model: Control without suffocation

In production environments, the most resilient model mirrors the intelligence-support approach.

AI systems can highlight issues, suggest improvements, and spot problems, but people still make the important decisions.

This structure strengthens governance, risk management, and compliance by ensuring:

- Automation accelerates insight, not unchecked action.

- High-impact decisions remain accountable.

- Audit trails reflect both system recommendations and human judgment.

- AI risk mitigation strategies are contextual, not mechanical.

Over time, this mix of human and AI decision-making builds trust in the organization. It lets AI governance grow without holding back innovation.
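A rough sketch of this split, assuming a two-tier scheme and an arbitrary auto-action limit: low-risk recommendations within the limit execute automatically, everything else waits for a person, and each result records both what the system recommended and who decided. All names and thresholds here are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional

AUTO_ACTION_LIMIT = 10.0  # hypothetical ceiling for automatic execution


@dataclass
class Recommendation:
    action: str
    risk_tier: str  # "low" or "high"
    impact: float   # estimated size of the change, in arbitrary units


def decide(rec: Recommendation, human_decision: Optional[str] = None) -> dict:
    """Return an audit-ready record of the recommendation and its outcome."""
    auto_ok = rec.risk_tier == "low" and rec.impact <= AUTO_ACTION_LIMIT
    if auto_ok:
        outcome, decided_by = "executed", "system"
    elif human_decision is None:
        outcome, decided_by = "escalated", "pending"
    else:
        outcome, decided_by = human_decision, "human"
    return {"recommended": rec.action, "outcome": outcome, "decided_by": decided_by}


print(decide(Recommendation("resize cache", "low", 2.0)))
print(decide(Recommendation("change credit threshold", "high", 2.0)))
print(decide(Recommendation("change credit threshold", "high", 2.0), "rejected"))
```

The returned dictionary keeps the system's suggestion and the final decision side by side, so the audit trail reflects both.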

| Governance Layer | AI Role | Human Role | CTO Priority | Risk if Ignored |
|---|---|---|---|---|
| Monitoring & Detection | Continuous anomaly detection, drift alerts, policy flagging | Review flagged issues and validate severity | Implement real-time monitoring in MLOps pipelines | Silent model drift, regulatory blind spots |
| Decision Execution (Low Risk) | Auto-optimization within predefined thresholds | Define boundaries and escalation triggers | Hard-code risk tiers and auto-action limits | Over-automation leading to unintended impact |
| Decision Execution (High Risk) | Provide recommendations and risk scoring | Approve, reject, or modify actions | Establish mandatory human checkpoints | Liability exposure, compliance violations |
| Audit & Logging | Capture model inputs, outputs, and recommendations | Review overrides and sign-off trails | Ensure audit trails are tamper-proof and board-ready | Weak defensibility during audits |
| Model Lifecycle Management | Track performance metrics and retraining triggers | Approve retraining schedules and deployment | Align retraining governance with business risk | Model decay, performance instability |
| Incident Response | Detect abnormal behavior | Initiate rollback or shutdown | Define “kill switch” authority and escalation paths | Slow response during AI failure |
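For the Audit & Logging row, one common technique is a hash-chained log, where each entry's hash covers the previous entry's hash. This makes edits detectable (tamper-evident) rather than impossible, so it is a sketch of one building block, not a complete answer to "tamper-proof". The entry layout and function names are assumptions; only Python's standard `hashlib` and `json` modules are used.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash for the first entry


def _digest(prev_hash: str, event: dict) -> str:
    """Hash the previous hash together with a canonical form of the event."""
    payload = json.dumps(event, sort_keys=True)
    return hashlib.sha256((prev_hash + payload).encode()).hexdigest()


def append_entry(log: list[dict], event: dict) -> None:
    """Append an event, chaining it to the hash of the previous entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({"event": event, "prev_hash": prev_hash,
                "hash": _digest(prev_hash, event)})


def verify(log: list[dict]) -> bool:
    """Recompute every hash; any edited or reordered entry breaks the chain."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["hash"] != _digest(prev_hash, entry["event"]):
            return False
        prev_hash = entry["hash"]
    return True


log: list[dict] = []
append_entry(log, {"model": "credit-model", "recommendation": "decline", "by": "system"})
append_entry(log, {"model": "credit-model", "override": "approve", "by": "analyst-17"})
print(verify(log))   # chain intact
log[0]["event"]["recommendation"] = "approve"  # simulate after-the-fact editing
print(verify(log))   # chain broken
```

In practice the latest hash would also need to be stored somewhere the log's writers cannot modify, otherwise the whole chain could simply be recomputed after an edit.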

Compliance risk management: Executive takeaway for CTOs

In the AI era, compliance risk management isn’t about putting up more barriers. It’s about creating smarter systems.

Under-governance invites regulatory scrutiny and operational failure. Over-governance drives shadow AI, weakens agility, and creates hidden exposure.

The strategic objective is equilibrium:

- Proportionate AI governance risk controls.

- Embedded AI risk management across the lifecycle.

- Clear AI decision rights in enterprise structures.

- Adaptive frameworks that evolve with regulation and technology.

For CTOs, this is not a theoretical debate. It is a leadership decision.

AI will not wait for perfect policies. The organizations that win will be those that treat compliance and risk management not as a brake, but as an enabling architecture, one that protects the enterprise while allowing it to move at the speed of intelligent systems.

In brief

AI isn’t just another compliance issue. It increases both opportunities and risks. Too many rules might feel safe, but in fast-moving tech markets, too much friction pushes innovation out of sight. No AI governance is risky. Too much governance is inflexible. Smart governance adapts. For CTOs, the goal is clear: create compliance risk management that allows responsible speed, not one that blocks progress.